August 30, 2021

Data Transformations with Databricks

DURATION

10mincategories

Tags

share

Introduction

The following data transformations are supported in Azure Databricks through data flows:

Joining

Parsing

Pivoting

Ranking

Filtering

To start with, let us create a storage account.

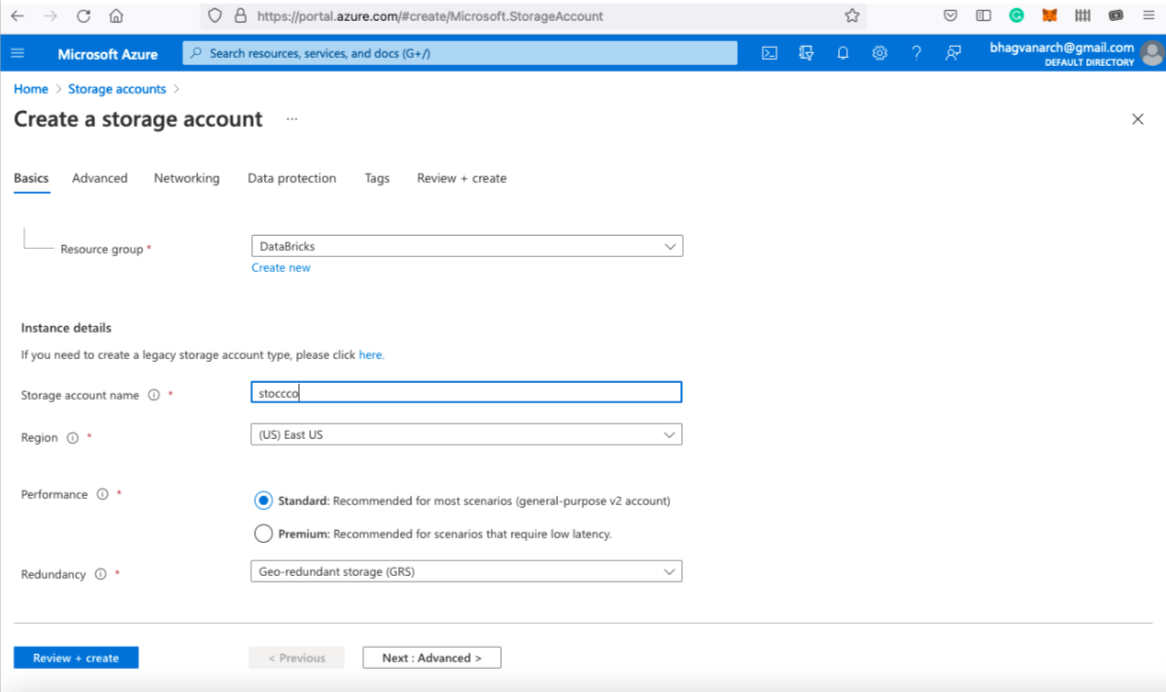

Creation of Storage Account

To begin, you need a storage account on Azure Databricks. You can create a storage account as shown below:

You can input the storage account name. You need to deselect the advanced options like “Enable blog public access”.

You need to select the cool access tier as shown below:

Click next and review the networking options as shown below:

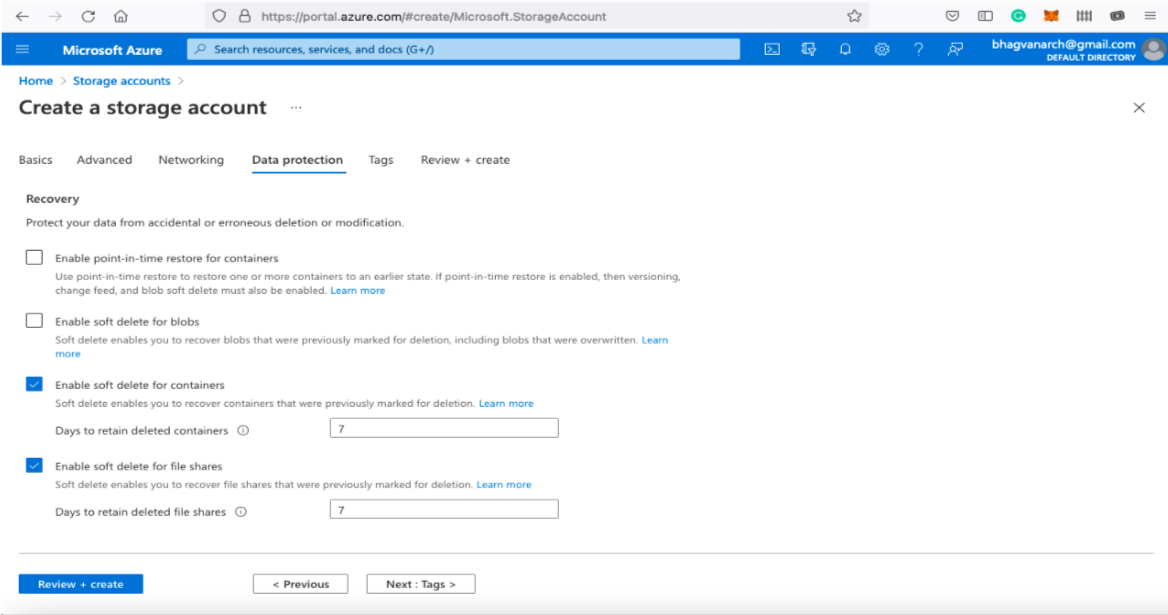

Click next and deselect the data partition options such as “Enable soft delete for blobs”.

Now you can review and start creating the storage account.

You can check the configuration before the creation of a storage account.

Creation of a Storage Container

Now you can create a storage container in the storage account, as shown below.

You can click on the create button and create a new container with a name. After creating, you can upload a blob by adding a file named “Products.csv”.

Click on the upload button to create a blob in the storage container.

You can see the created blob in the storage container as shown below.

You can preview the csv file as shown below:

Now let us look at how to create a data factory.

Creation of a Data Factory

You can create a data factory by clicking on create a data factory. After creating a data factory, you can click on orchestrate as shown below:

Creation of Data Flow

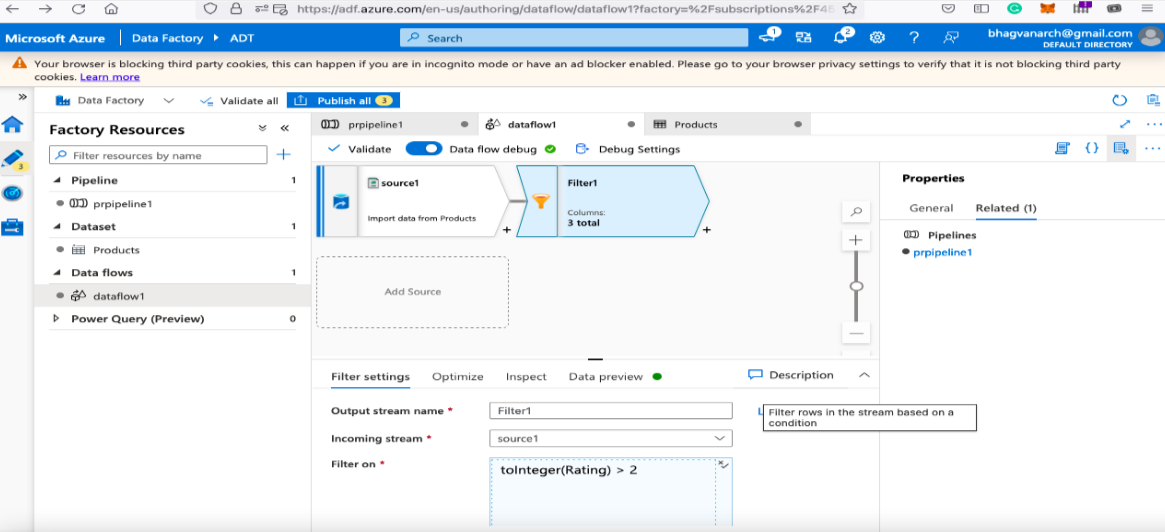

You can create a data flow in the data factory orchestrate option.

You can name the data flow as shown above. Click on add source and create a new dataset as shown below.

You can name the dataset and create a new linked service as shown below:

You can select Azure Data Lake Storage Gen2 as the service type and dataset as CSV.

You can test the connection after setting the subscription type.

After the testing is successful you can browse the storage container and the blob for the dataset.

You can select the file products.csv as shown below:

You can name the dataset as shown below:

You can check the “First row as header” as shown below.

You can now check the file schema as shown below:

You can add filters to the data flow as shown below:

You can type in the boolean expression for “products having a rating greater than two”.

You can preview the data after filtering as shown below:

Now that we have almost created the data transformation first cut, let us finish the pipeline by adding the sink.

Creation of Pipeline

In the pipeline shown below, properties for the pipeline are set with default values. Source data set is created by using the blob in the storage container. The pipeline can be completed by adding the sink as shown below.

Sink can be added with a new dataset by clicking on the new button.

You can select the new dataset type as Azure Data Lake Storage Gen2 as shown below:

You can set the format of the dataset as CSV.

The pipeline and the data flow is now complete.

Data Validation

Data flow is validated and published as shown above. Validation looks for dataset reference, linked service, data source definition, and sink in the data flow configuration.

Dataset needs to have minimum size configured or childitems property set; otherwise, minimum size default is zero. The time out value needs to be specified, otherwise the default is seven days. Sleep value can be set for validation attempts; otherwise the default is ten seconds.

Copying Data

You can have data copied from the in-house hosted data store to a cloud-based data source. Azure Databricks-based Azure Data Factory can be used for data copying and transformations. The data can be analyzed for BI and reporting on the cloud. Copying of data might involve the following operations:

Serialization

Deserialization

Compression

Decompression

Column Mapping.

Notebook Triggers

You can have notebook triggers for data transformations. They help in adding the data to the data source or Azure Analytics. Notebook can be part of a pipeline in the data flow. The execution of the notebook can be triggered by a notebook trigger.

In the next part of the series, we will look at Azure Databricks ETL.

References:

Azure Databricks Platform: https://docs.microsoft.com/en-us/azure/databricks/