March 31, 2026

AI Reviewers – Member Help Guide

1. Introduction

We are introducing AI Reviewers at Topcoder for development challenges. You can see them in the Review App for future challenges. If you are not familiar with the Review App, check out this article.

AI Reviewers introduce an automated pre-screening step into the challenge workflow, helping assess submissions early by providing scores, feedback, and quality signals. This enables competitors to improve their submissions during the submission phase and helps streamline what progresses to manual review.

While AI influences the flow of submissions, human oversight remains in place through reviewers and copilots, ensuring that decisions can be reviewed and adjusted where needed.

2. What This Means for Each User

AI Reviewers introduce new interactions across the challenge workflow.

Let’s look at what this means for each user type.

2.1 For Competitors (Submitters)

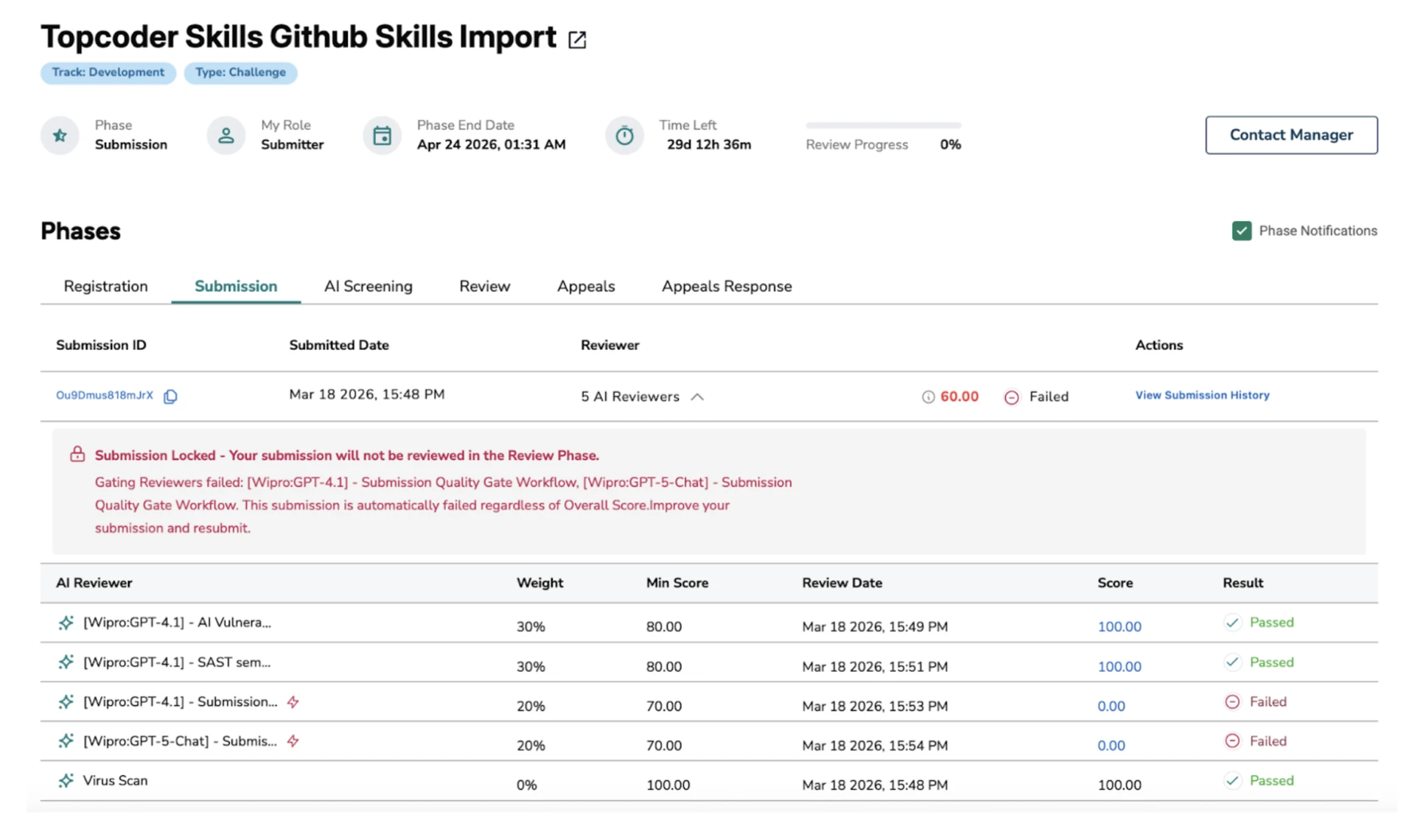

After you submit, your submission is queued for AI Review. Results are generated shortly after submission, though timing may vary depending on system load.

What You Will See

- AI Review status (e.g., pending, completed)
- Once completed:
  - Aggregated score (determines Pass / Fail)
  - Pass / Fail status
  - Individual scores from one or more AI workflows
  - The weight of each AI workflow
  - The review date of each AI workflow

AI Review Summary View

In the summary view, you can:

- See the aggregated score and final status (Pass/Fail)
- View scores from individual AI workflows
- Hover over the info icon next to the aggregated score to see how it is calculated (the calculation uses the weight of each AI workflow)

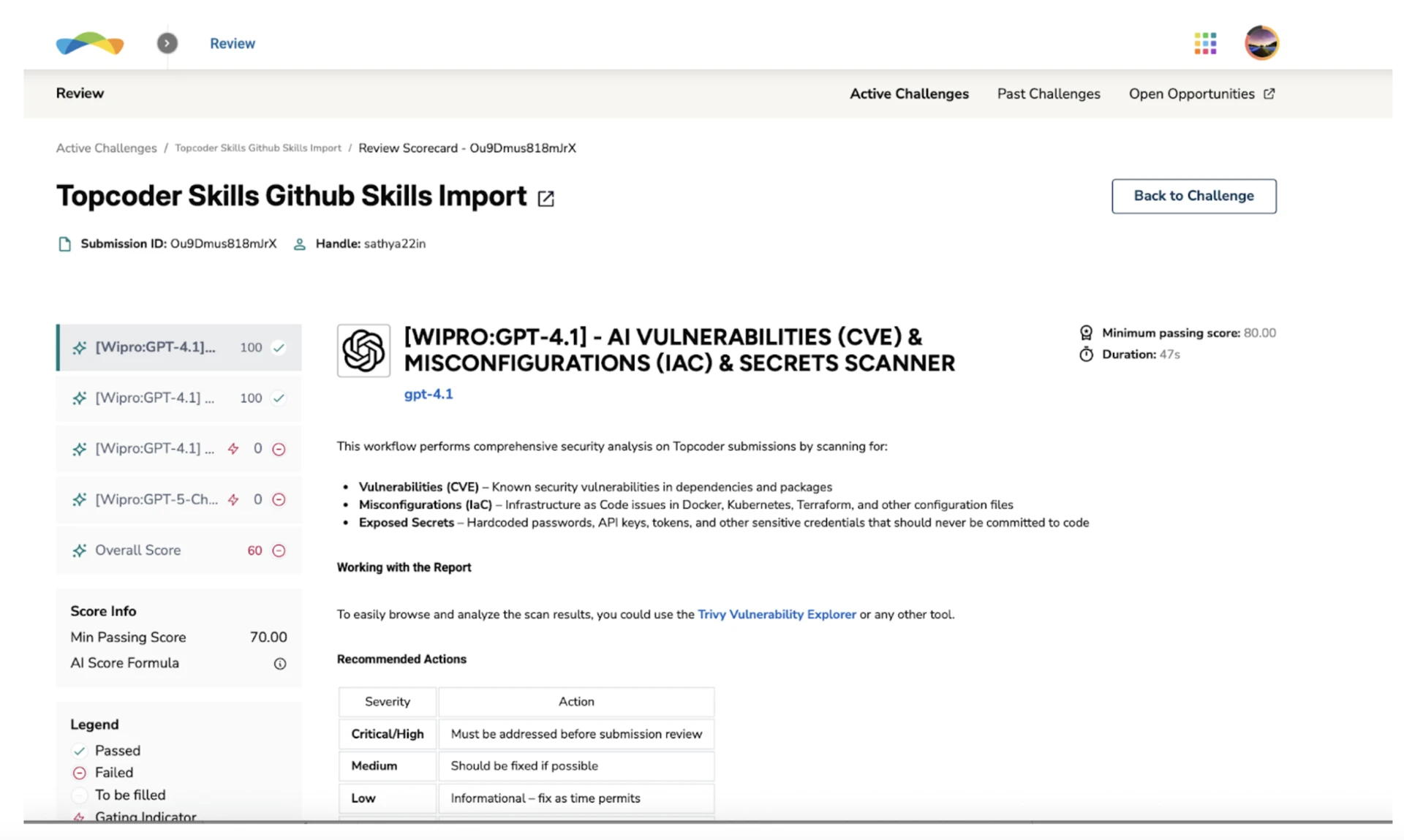

Some AI workflows are gated (marked with the thunder icon). If a gated workflow does not reach its minimum score, the entire submission fails, regardless of the aggregated score. Passing these workflows is critical for your submission to pass review.
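To make the interaction between weights and gated workflows concrete, here is a minimal sketch. It assumes the aggregated score is a weighted average of the workflow scores and that Pass requires both a minimum aggregated score and every gated workflow reaching its own minimum. The exact formula, the 0-100 scale, the threshold of 75, and all names (`WorkflowResult`, `aggregate`, `pass_threshold`) are illustrative assumptions, not the Review App's actual implementation; the info icon in the summary view shows the real calculation.

```python
from dataclasses import dataclass


@dataclass
class WorkflowResult:
    """One AI workflow's result (hypothetical structure for illustration)."""
    name: str
    score: float            # assumed to be on a 0-100 scale
    weight: float           # relative weight of this workflow
    min_score: float = 0.0  # assumed per-workflow minimum, checked only when gated
    gated: bool = False     # gated workflows can fail the whole submission


def aggregate(results, pass_threshold=75.0):
    """Return (aggregated_score, passed) under the assumptions stated above."""
    total_weight = sum(r.weight for r in results)
    if total_weight <= 0:
        raise ValueError("at least one workflow must have a positive weight")

    # Assumed formula: weighted average of the individual workflow scores.
    aggregated = sum(r.score * r.weight for r in results) / total_weight

    # A gated workflow below its minimum fails the submission outright.
    gate_failed = any(r.gated and r.score < r.min_score for r in results)

    passed = aggregated >= pass_threshold and not gate_failed
    return aggregated, passed


# Example: a high aggregated score does not rescue a failed gated workflow.
results = [
    WorkflowResult("code-quality", score=95, weight=70),
    WorkflowResult("security", score=60, weight=30, min_score=70, gated=True),
]
score, passed = aggregate(results)
print(score, passed)
```

In this example the weighted average is above the assumed threshold, yet the submission still fails because the gated workflow scored below its minimum.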

Note: You can only appeal a failure that results from human review. A failure from an AI workflow cannot be appealed.

Detailed Feedback View

By clicking on an individual workflow score, you can:

- View detailed feedback and comments
- Understand specific issues or improvement areas identified by that workflow

What You Should Do

- Review the AI feedback carefully
- Use the insights to improve your submission

Important

- If your submission is marked as Fail, it will not move to the manual review phase (the standard review conducted by human reviewers). However, you can update and resubmit as long as the submission phase is still open.
- Even if your submission is marked as Pass, you can continue to refine and resubmit for a better outcome.

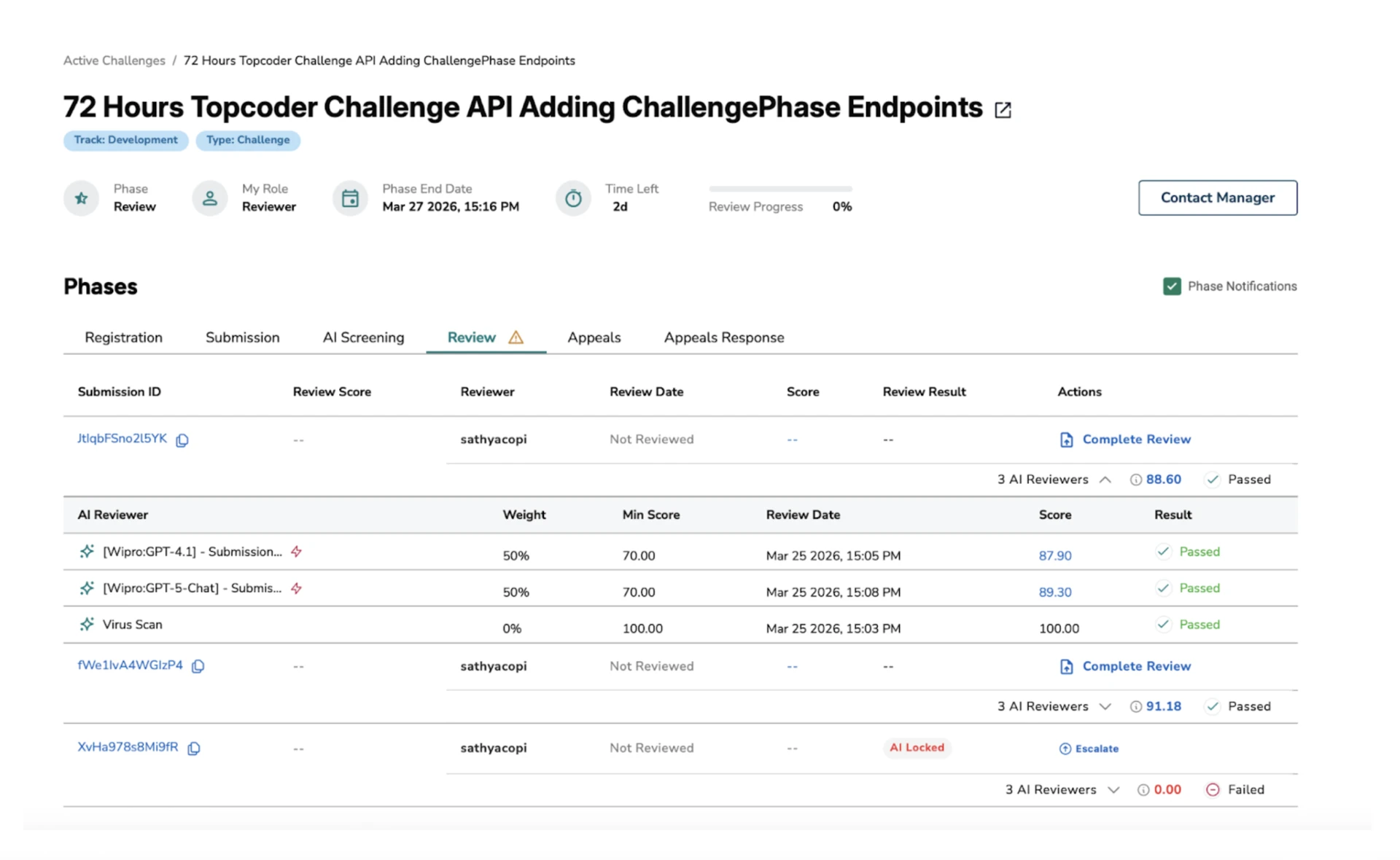

2.2 For Reviewers

During the review phase, AI Review results are available to help you prioritize and evaluate submissions.

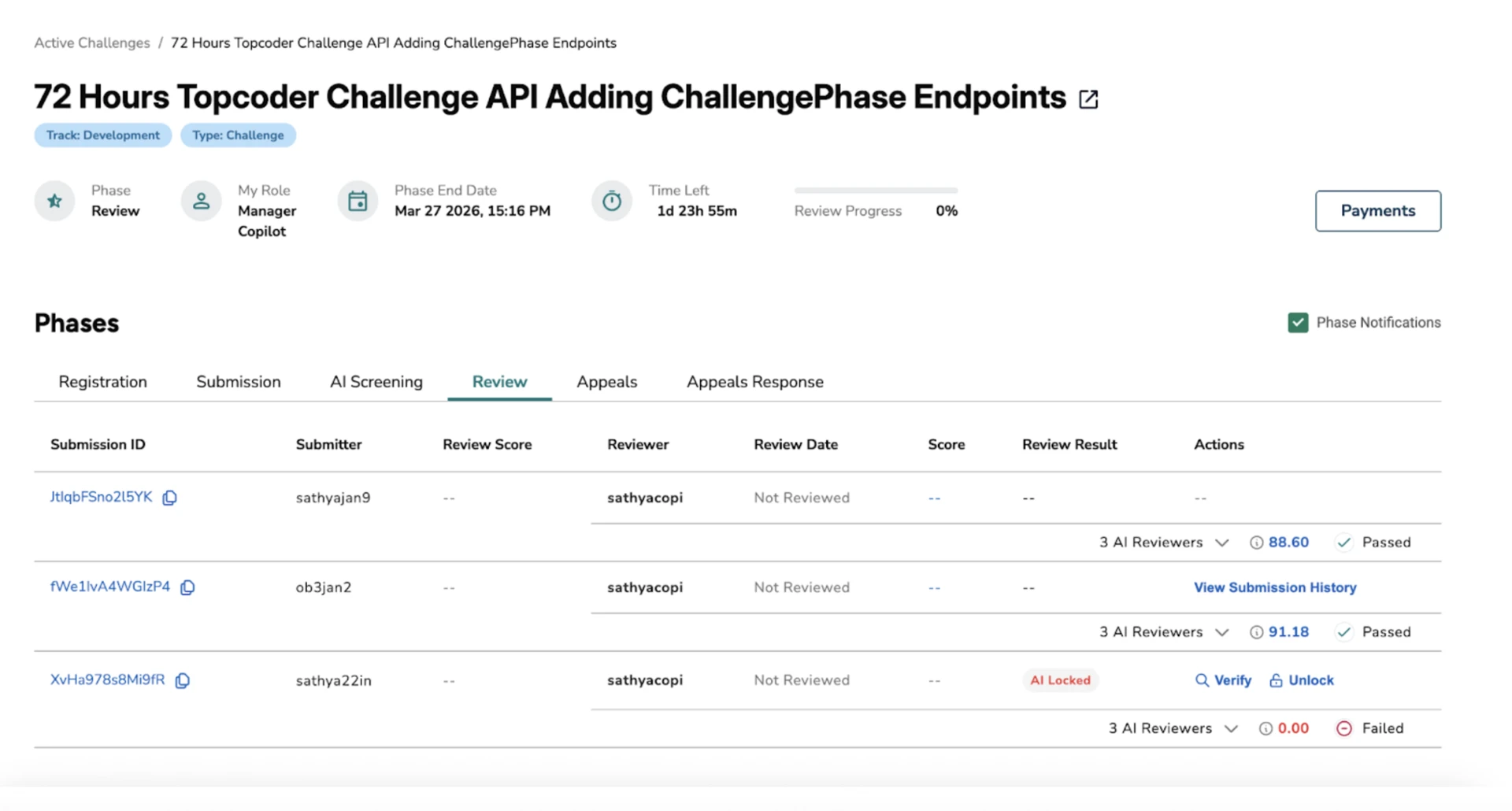

What You Will See

- Submissions that have passed AI Review and are available for manual review
- Submissions that are locked because they failed AI Review and are not available for manual review
- For each submission:
  - AI Review aggregated score and Pass/Fail status
  - AI workflow-level scores
  - Detailed AI feedback

Reviewing AI-Passed Submissions

For submissions that have passed AI Review:

- You can proceed with normal manual evaluation
- AI feedback is available to help guide your review

What You Should Do

- Use AI feedback as a starting point, not a conclusion
- Perform a complete manual assessment
- Assign scores based on your judgment

Important: Please evaluate each submission using your own expertise and judgment, without relying on external AI tools. You are fully responsible for the quality of the outcomes in this challenge.

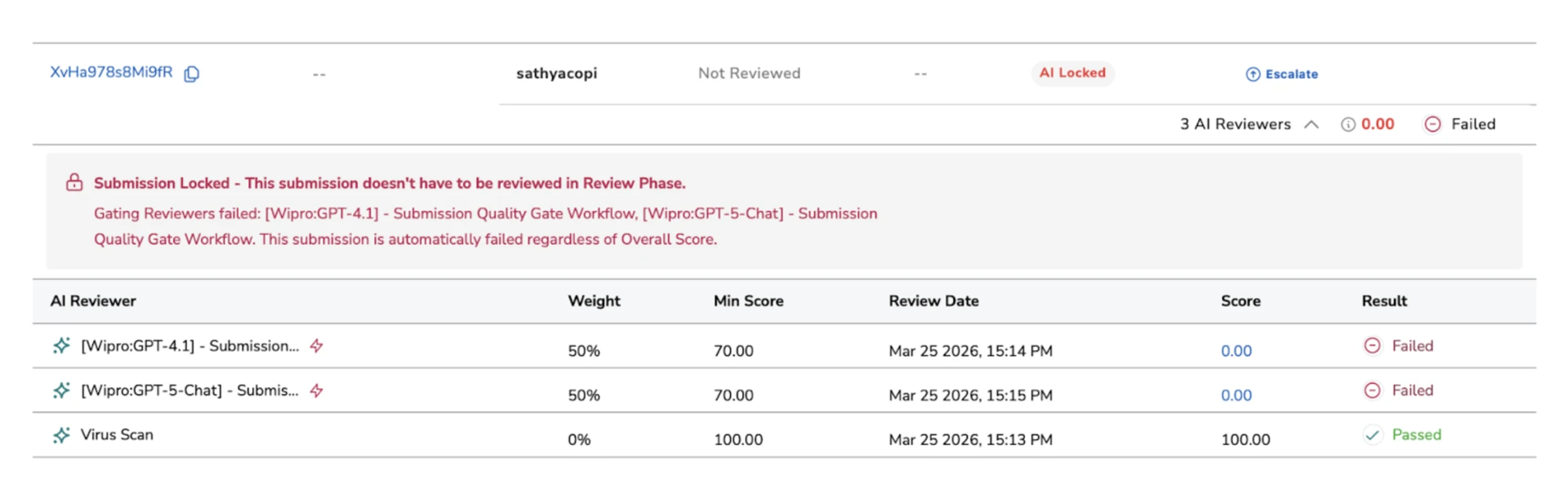

Handling Locked Submissions

Submissions that fail AI Review are locked and do not move to manual review by default. However, as a reviewer, you can escalate a locked submission if you believe it deserves further evaluation.

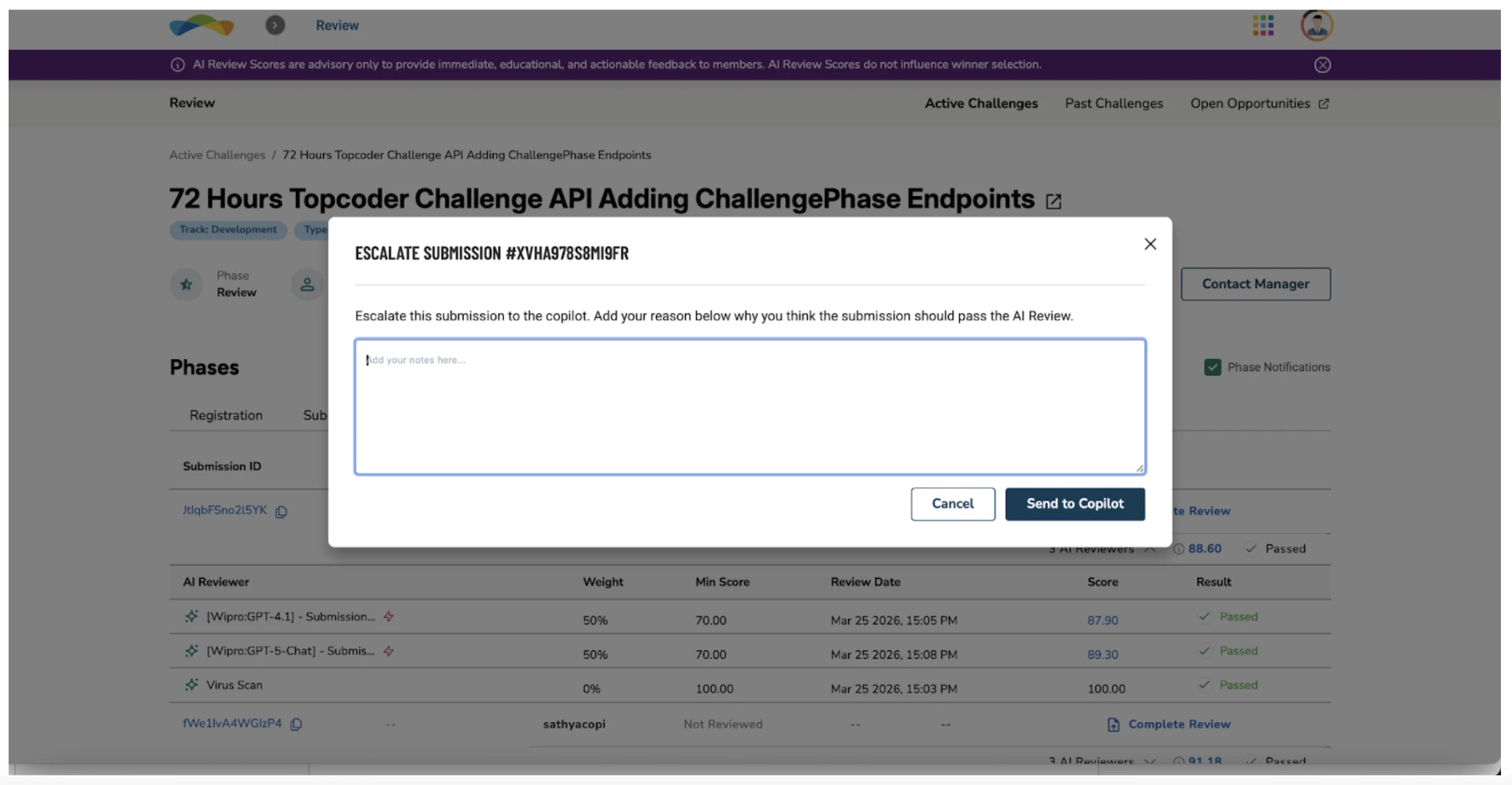

Escalation Flow

For locked submissions, you can initiate an escalation by clicking the escalate icon next to the submission.

When you escalate a locked submission:

- The request is sent to the copilot for review
- The copilot will either:
  - Approve → Submission is unlocked and becomes available for manual review
  - Reject → Submission remains locked

If a locked submission is unlocked, all reviewers are notified via email, ensuring it is picked up for manual evaluation.
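The escalation flow above can be read as a small state machine. The sketch below is only an illustration of those transitions: the state names, function names, and error handling are hypothetical and do not reflect the Review App's internals. Whether a rejected submission can be escalated again is not stated in this guide, so the sketch simply returns it to the locked state.

```python
from enum import Enum


class Status(Enum):
    """Hypothetical submission states used only for this illustration."""
    AVAILABLE = "available for manual review"
    LOCKED = "locked (failed AI Review)"
    ESCALATED = "locked, escalation pending with copilot"


def after_ai_review(passed: bool) -> Status:
    # Submissions that fail AI Review are locked by default.
    return Status.AVAILABLE if passed else Status.LOCKED


def escalate(status: Status) -> Status:
    # A reviewer can escalate only a locked submission.
    if status is not Status.LOCKED:
        raise ValueError("only locked submissions can be escalated")
    return Status.ESCALATED


def copilot_decision(status: Status, approve: bool) -> Status:
    # The copilot approves (unlock) or rejects (stay locked) an escalation.
    if status is not Status.ESCALATED:
        raise ValueError("no escalation request is pending")
    return Status.AVAILABLE if approve else Status.LOCKED


# Example: a failed submission is escalated and then approved by the copilot.
status = after_ai_review(passed=False)
status = escalate(status)
status = copilot_decision(status, approve=True)
print(status.value)
```

Modeling the flow this way makes the two guard conditions explicit: a reviewer cannot escalate a submission that is already available, and a copilot decision only applies when an escalation request exists.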

What You Should Do

- Focus primarily on AI-passed submissions for evaluation
- Review locked submissions selectively
- Escalate only when:
  - The AI decision appears incorrect
  - The submission shows potential despite failing

2.3 For Copilots

During the review phase, copilots have oversight of AI-reviewed submissions and control over which locked submissions can proceed to manual review.

What You Will See

- Submissions that pass AI Review and human review
- Submissions that are locked due to failing AI Review
- Escalation requests raised by reviewers, if any
- For each submission:
  - AI Review aggregated score and Pass/Fail status
  - AI workflow-level scores
  - Detailed AI feedback (via drill-down)

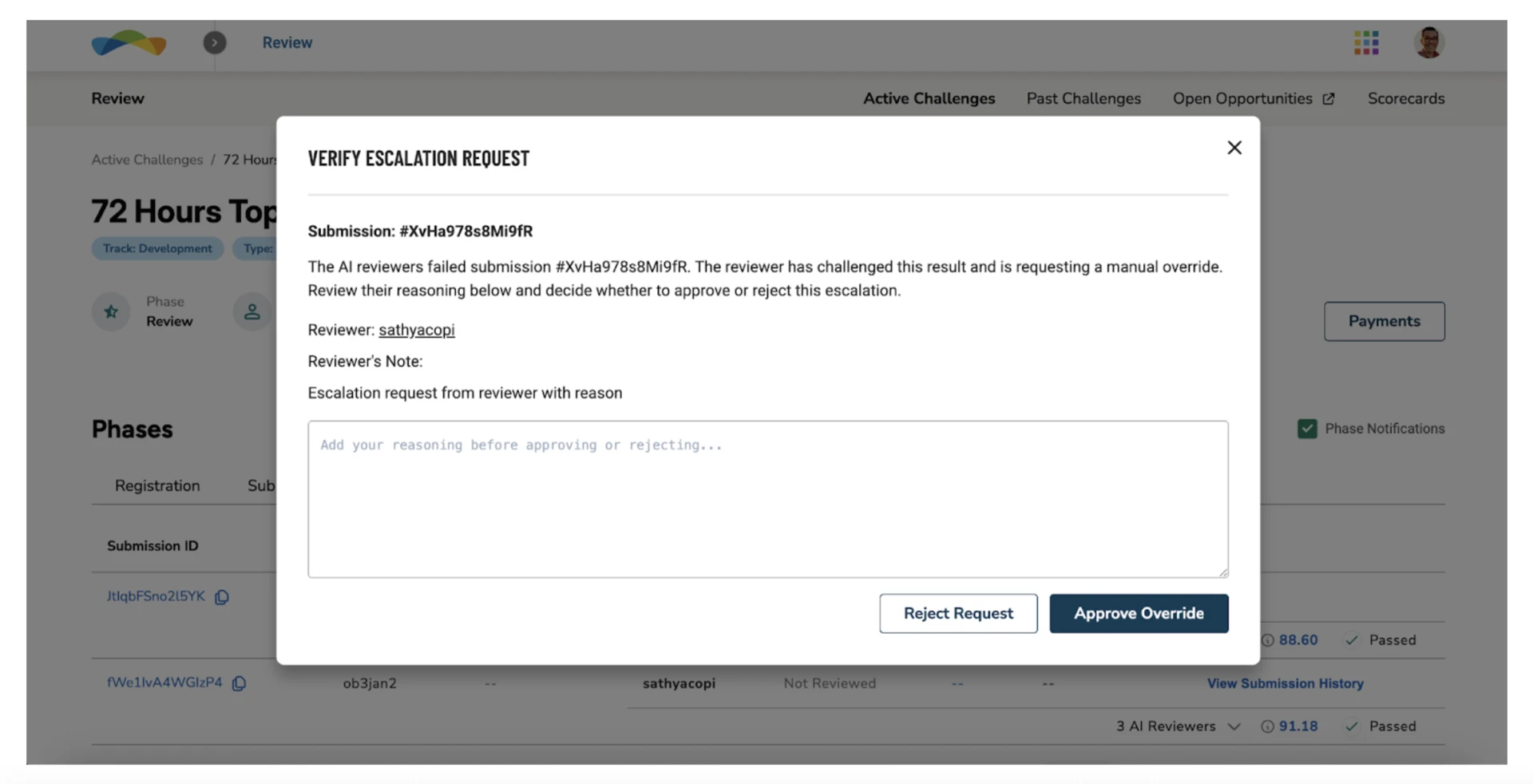

Reviewing Escalations

Escalated submissions are indicated by a verify icon next to the locked submission.

- Click the verify icon to view the escalation request
- Review the submission details and AI feedback

When a reviewer escalates a locked submission, you can:

- Approve → The submission is unlocked, all reviewers are notified by email, and the submission moves to manual review
- Reject → The submission remains locked

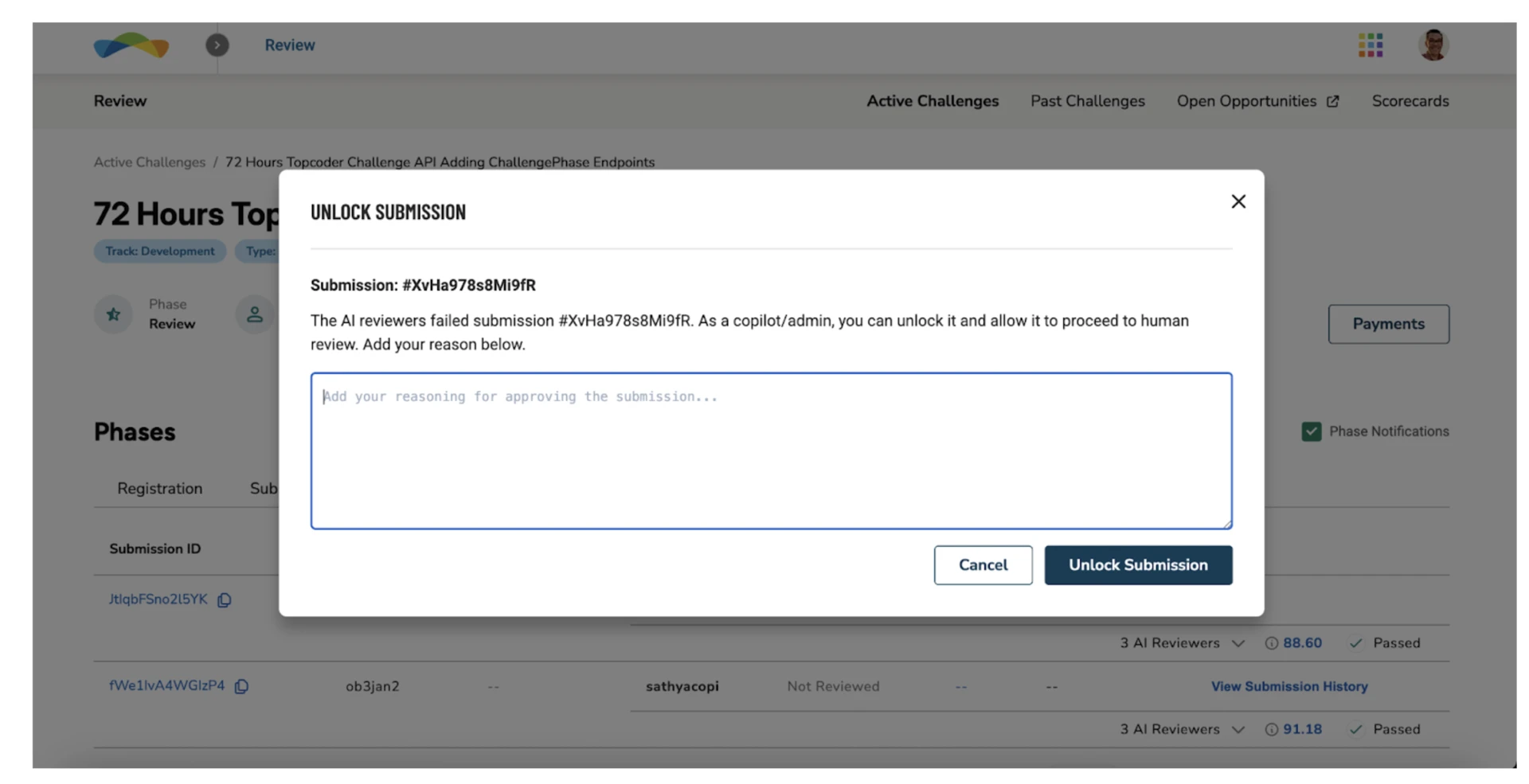

Directly Unlocking Submissions

In addition to handling escalations, you can directly unlock submissions.

- Click the unlock icon next to a locked submission to unlock it
- The submission will then move to the manual review phase

This allows you to act proactively if you identify submissions that should be reviewed further.

What You Should Do

- Review escalations carefully before approving
- Use AI feedback as supporting input, not the sole decision factor
- Unlock submissions when:
  - The AI decision appears incorrect
  - The submission shows sufficient merit for manual evaluation

Important: Please evaluate each submission using your own expertise and judgment, without relying on external AI tools.